
Tutorials

Belief and Decision Networks

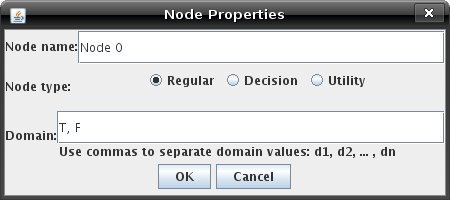

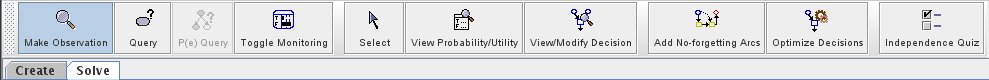

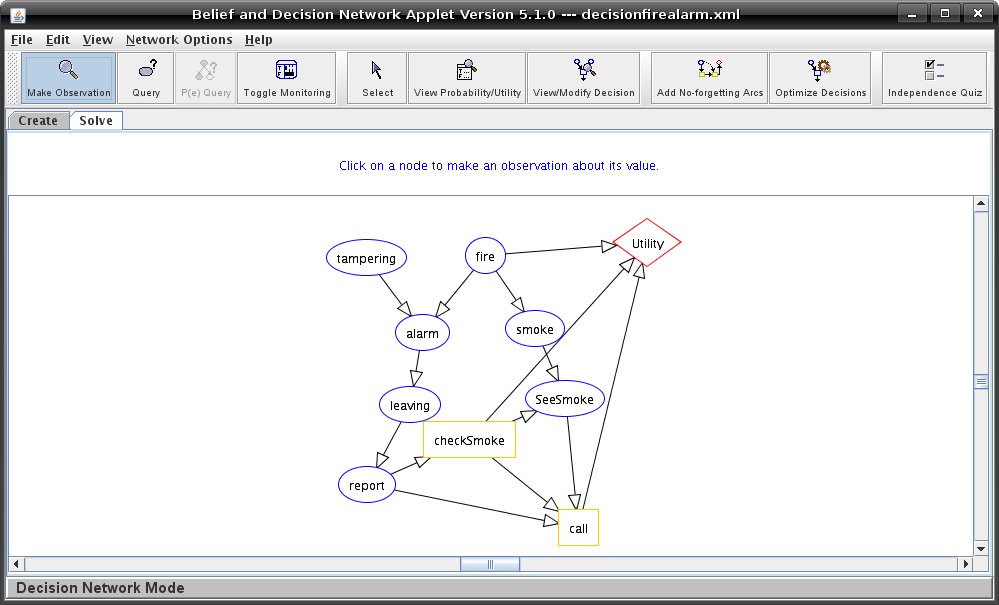

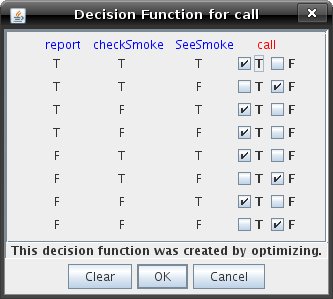

Tutorial Six (Supplementary): Decision Networks

By now you should be familiar with creating graphs and querying belief networks. Creating a decision problem is not much more difficult. First, go to the 'Network Options' menu and select 'Decision Network Mode' from 'Belief/Decision Modes'. The options change slightly, and 'Solve' mode enables some buttons for decision nodes that were not available in 'Belief Network Mode'. If you load a sample decision network, the applet switches to 'Decision Network Mode' automatically.

Try creating a node somewhere on the graph. The Node Properties dialog now lets you set the node type with the radio buttons next to 'Node type'. By default, nodes are created as regular nodes. When you select 'Utility' as the type, you can no longer enter domain information for the node, because a utility node has only a single domain element. You can create only one utility node in the graph. You can edit the utility table of a utility node just as you edit the probability table of a regular node. Decision nodes have no probability tables, but you will be able to modify their decision functions later on.

Create a decision network of your own if you like, or load an existing decision problem to query. Then switch to 'Solve' mode. The solve toolbar will now look like the figure below:

The first step is to ensure that all decision nodes relevant to the utility node are ordered. This means there must be a directed path from one of them to the utility node that passes through each of them exactly once. Once this holds, click the 'Add no-forgetting arcs' button. Decisions can be optimized only after all no-forgetting arcs are present. The pre-existing sample problems already include these arcs, but you can return to 'Create' mode and delete some edges to see what happens.
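The ordering check and the effect of 'Add no-forgetting arcs' can be sketched in a few lines of Python. This is a minimal sketch, not the applet's implementation: the `{node: set_of_parents}` representation and the helper names `reaches`, `check_total_order` and `add_no_forgetting_arcs` are hypothetical. It assumes the decisions are listed in their intended order and uses the usual definition of no-forgetting: each decision also gets, as parents, every earlier decision and every parent of an earlier decision. The example graph is only loosely modeled on the Fire Alarm problem, with the arcs from Report and CheckSmoke to Call deleted.

```python
from collections import defaultdict

def children_of(parents):
    """Invert a {node: set_of_parents} map into {node: set_of_children}."""
    kids = defaultdict(set)
    for node, ps in parents.items():
        for p in ps:
            kids[p].add(node)
    return kids

def reaches(parents, src, dst):
    """Return True if there is a directed path from src to dst (depth-first search)."""
    kids = children_of(parents)
    stack, seen = [src], set()
    while stack:
        node = stack.pop()
        if node == dst:
            return True
        if node in seen:
            continue
        seen.add(node)
        stack.extend(kids[node])
    return False

def check_total_order(parents, decisions, utility):
    """True if the decisions, in the given order, lie on one directed path to the utility node."""
    chain = list(decisions) + [utility]
    return all(reaches(parents, a, b) for a, b in zip(chain, chain[1:]))

def add_no_forgetting_arcs(parents, decisions):
    """Return a copy of `parents` in which each decision also has every earlier
    decision, and every parent of an earlier decision, as a parent."""
    new = {node: set(ps) for node, ps in parents.items()}
    remembered = set()
    for d in decisions:
        new[d] |= remembered
        remembered |= new[d] | {d}
    return new

# Hypothetical graph, loosely based on the Fire Alarm problem.
parents = {
    "Tampering": set(), "Fire": set(),
    "Alarm": {"Tampering", "Fire"}, "Smoke": {"Fire"},
    "Leaving": {"Alarm"}, "Report": {"Leaving"},
    "CheckSmoke": {"Report"},                # decision
    "SeeSmoke": {"Smoke", "CheckSmoke"},
    "Call": {"SeeSmoke"},                    # decision; arcs from Report, CheckSmoke deleted
    "Utility": {"Fire", "CheckSmoke", "Call"},
}
order = ["CheckSmoke", "Call"]
print(check_total_order(parents, order, "Utility"))  # True
fixed = add_no_forgetting_arcs(parents, order)
print(sorted(fixed["Call"]))  # ['CheckSmoke', 'Report', 'SeeSmoke']
```

Because every added parent is an ancestor of an earlier decision, and each earlier decision reaches the later one, adding these arcs cannot create a cycle when the ordering check passes.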
No-forgetting arcs encode the assumption that the agent remembers what it has observed and decided: any information available before a decision is also available before every later decision. Below is the Fire Alarm Decision Problem:

You can now optimize the decisions, which produces the optimal policy. When you click the 'Optimize Decisions' button, the Prompt dialog appears and lets you choose Brief or Verbose Mode. In Brief Mode, you are shown the expected value of the policy. In Verbose Mode, you can also see the variable elimination steps that provide the information needed to maximize the decisions.

This policy is optimal given the observations currently in the network. To see the effect of changing the policy, click 'View/Modify Decision' and then click a decision node. A window like the one below appears; this one was produced by inspecting the decision function for the variable Call.

Change as many of the values as you like, click 'OK', and then query the utility node. You might see a different expected utility. If it has changed, it will be lower than before, because the original policy was already optimal. You can also query a decision node: press the 'Query' button and select the decision node to see how likely the agent is to make each decision. This probability depends on the decision function and on the probabilities of the decision node's parents.

Finally, decision networks are subject to a few restrictions. First, there may be only one utility node. Second, the utility of that node, and the probabilities of any nodes that have decision nodes as parents, are undefined until the decision functions of those decision parents are defined. Third, a decision cannot be optimized if one of its parents has an observed value. A decision function specifies the agent's action for every context, that is, for every combination of values of its parents. If a decision is observed or has an observed parent, the applet cannot find a decision function for every context, because the observation restricts that parent to a single value.
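For a single decision, optimizing amounts to choosing, for each combination of parent values, the action with the highest expected utility. The Python sketch below does this by brute-force enumeration rather than the variable elimination the applet uses, on a hypothetical three-variable network (Fire, SeeSmoke, and the decision Call) whose probabilities and utilities are made up for illustration. It also shows why a hand-modified decision function cannot beat the optimal one, and how the probability of a decision node follows from its decision function and the distribution of its parents. The function names are hypothetical.

```python
from itertools import product

# Hypothetical network: Fire -> SeeSmoke -> Call (decision); Utility(Fire, Call).
P_FIRE = {True: 0.01, False: 0.99}
P_SEE_GIVEN_FIRE = {True: 0.9, False: 0.05}  # P(SeeSmoke=True | Fire)
UTILITY = {  # (fire, call) -> utility; made-up numbers
    (True, True): -200, (True, False): -5000,
    (False, True): -200, (False, False): 0,
}
ACTIONS = (True, False)

def joint(fire, see):
    """P(Fire=fire, SeeSmoke=see)."""
    p_see = P_SEE_GIVEN_FIRE[fire]
    return P_FIRE[fire] * (p_see if see else 1 - p_see)

def optimize():
    """For each value of the parent SeeSmoke, pick the action maximizing expected utility."""
    policy = {}
    for see in (True, False):
        # Unnormalized scores (P(see) * EU) give the same argmax within one context.
        scores = {a: sum(joint(f, see) * UTILITY[(f, a)] for f in (True, False))
                  for a in ACTIONS}
        policy[see] = max(scores, key=scores.get)
    return policy

def expected_utility(policy):
    """Expected utility of a deterministic decision function {SeeSmoke value: action}."""
    return sum(joint(f, s) * UTILITY[(f, policy[s])]
               for f, s in product((True, False), repeat=2))

def p_decision(policy, action):
    """P(Call=action): total probability of the contexts in which the policy picks action."""
    return sum(joint(f, s) for f, s in product((True, False), repeat=2)
               if policy[s] == action)

best = optimize()
print(best)                                      # {True: True, False: False}
print(round(expected_utility(best), 2))          # -16.7
always_call = {True: True, False: True}          # a hand-modified decision function
print(round(expected_utility(always_call), 2))   # -200.0
print(round(p_decision(best, True), 4))          # 0.0585
```

Changing the decision function (here, always calling) lowers the expected utility, mirroring what you see in the applet after editing a decision with 'View/Modify Decision'.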
